The true delight is in the finding out rather than in the knowing.

-- Isaac Asimov

Introduction

It's hard to automate testing for graphics programming. Most of the time you are looking for something that looks nice or good, and you are forced to rely on just eyeballing it. Worse, you might need an artist to take a look at it, since programmer art is a fickle thing. There are some things that you can automate, though, and the fact that the computer is a cool analytic machine with no concept of what looks good or bad actually works to our advantage.

Python Imaging Library

The Python Imaging Library (PIL) brings really powerful tools into your arsenal if you write your little utility scripts in Python. The idea here is that you first do a binary diff on the actual files without knowing what they are, and then bring them in for some additional processing if you like. Most of the time the binary diff should just report that everything is OK; when it doesn't, it might be a good idea to run the images through some kind of difference process. PIL makes this very easy for us:

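    import hashlib
    from PIL import Image, ImageChops  # classic PIL: "import Image", "import ImageChops"

    # Cheap check first: hash the raw file bytes without decoding anything.
    with open(filenameA, 'rb') as f:
        dataA = f.read()
    with open(filenameB, 'rb') as f:
        dataB = f.read()

    if hashlib.md5(dataA).hexdigest() != hashlib.md5(dataB).hexdigest():
        # The files differ, so decode them and save a per-pixel difference image.
        imageA = Image.open(filenameA)
        imageB = Image.open(filenameB)
        diff = ImageChops.difference(imageA, imageB)
        diff.save(diffFilename)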

OK, there is a little bit of slop here: we potentially load the images twice. We could just as easily have computed the MD5 on the pixel data itself, although then we would always pay the cost of decoding the images. It also turns out that while the Python solution is quick to put together, it is kind of slow to run. So we search for faster alternatives.
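The double load mentioned above can be avoided by reading each file once and comparing the raw bytes directly; with both files already in memory, a hash adds nothing over a plain equality test. The sketch below uses only the standard library. The helper name `read_pair_if_different` is made up for illustration and is not part of the original script.

```python
from pathlib import Path


def read_pair_if_different(path_a, path_b):
    """Read both files once; return (bytes_a, bytes_b) if they differ, else None.

    Hypothetical helper: comparing the bytes directly gives the same answer
    as comparing their MD5 digests, without hashing anything.
    """
    data_a = Path(path_a).read_bytes()
    data_b = Path(path_b).read_bytes()
    if data_a == data_b:
        return None  # byte-identical files, nothing to decode
    return data_a, data_b
```

When the function returns a pair, the images can be decoded straight from memory, for example with `Image.open(io.BytesIO(data_a))`, so nothing is read from disk a second time.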

Perceptual Diff

Of course, the Internet contains all sorts of things, and you can bet that if you've thought of it, there is an implementation out there. It turns out that Hector Yee already wrote a program for this called Perceptual Diff. There were a few minor things I wanted to change, and the great thing about open source is that I actually have the source right there. First, the program returns 1 upon success (i.e. no difference), which goes against the Unix convention, so I changed it to return 0. Second, the dependencies on libtiff and libpng were a pain, so I rewrote the IO routines to use the most excellent FreeImage library. I've been using FreeImage since 2005, when we finally dumped DevIL (yes, it really was the devil: OpenGL syntax for image loading, what the hell were they thinking?).
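Returning 0 on success matters as soon as the tool is driven from a script, because shells and process libraries treat a zero exit code as success. A minimal sketch of such a wrapper follows; `images_match` is a hypothetical helper, and it assumes the patched convention where 0 means no difference.

```python
import subprocess


def images_match(command):
    """Run an image-compare command and report whether it exited with 0.

    `command` is an argument list, e.g. ["pdiff", "a.png", "b.png"].
    Assumes the patched convention: exit code 0 means "no difference".
    """
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode == 0
```

With the original return value of 1 on success, every caller would have to invert this check by hand.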

The program is kind of slow right now, even on rather small images, which leads me to believe that there are some optimizations to be made. It might not be such a big deal for an occasional image, but when you batch compare dozens of images, it all adds up. Hm, might be a good excuse to get my feet wet with some CUDA programming...
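When comparing in batches, one way to keep the total time down is to run the slow perceptual compare only on files that are not byte-identical. Here is a sketch using the standard library's `filecmp.cmpfiles`. The layout (a reference directory and a candidate directory with matching file names) and the function name are assumptions made for the example.

```python
import filecmp
import os


def files_needing_full_compare(reference_dir, candidate_dir):
    """Return sorted names present in both directories whose bytes differ.

    shallow=False forces a content comparison instead of trusting os.stat().
    """
    common = sorted(set(os.listdir(reference_dir)) & set(os.listdir(candidate_dir)))
    _match, mismatch, errors = filecmp.cmpfiles(
        reference_dir, candidate_dir, common, shallow=False
    )
    # Files that could not be compared are treated as suspicious too.
    return sorted(mismatch + errors)
```

Only the names returned here need to go through the expensive image comparison.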

I've packaged up the binaries of this version in a simple little zip file, with the dependencies on FreeImage in there as well. This zip is all you need to start compiling your own version if you'd like.

msbuild PerceptualDiff.sln /p:Configuration=Release

vcbuild PerceptualDiff.sln "Release|Win32"

Imagediff

One really desirable thing is to have a fast diff between images. I'm not really interested in how the difference is calculated (OK, not really true), but it needs to be really fast for all these HD images we want to compare. Both the Python solution and Perceptual Diff actually take significant time. For Perceptual Diff, here is the output from a sample run:

    C:\Temp\diffarticle>pdiff hd_white1.png hd_white2.png -output perceptual\diff1.png -verbose
    Field of view is 45.000000 degrees
    Threshold pixels is 100 pixels
    The Gamma is 2.200000
    The Display's luminance is 100.000000 candela per meter squared
    Loading took 79.3 ms
    Converting RGB to XYZ
    Constructing Laplacian Pyramids
    Performing test
    Comparing took 5962.1 ms
    FAIL: Images are visibly different
    5765 pixels are different
    Wrote difference image to perceptual\diff1.png

As you can see, the actual comparison took almost 6 seconds! That's on my slightly slow 2.4GHz quad-core machine (and of course just one core is occupied). That's a long time to wait, though. It probably seemed like more time than it really was; I only noticed it because I was running a lot of these compares. It annoyed me so much that I figured I'd write the simplest, quickest and dirtiest little program to see how bad it could be. A little while later (and two cokes) I had a small program done. Here is the runtime for that other tool:

    C:\Temp\diffarticle>imagediff hd_white1.png hd_white2.png mydiffs\diff1.png -v
    Load source images took 80.8 ms
    Images differ
    Perform diff took 4.2 ms
    Save diff image took 96.1 ms

That is what I would expect: the IO operations dominate the time. Even so, 4.2 ms to process an image is kind of bad; my code was not anything fancy. If you need a comparison, consider that the post-process screen filters on a regular graphics card basically need to do this as well, combining two images into one.

So this is a case of comparing apples to oranges: they are both fruits, but they taste quite different. Perceptual Diff does have a method to its madness: it uses a perceptual metric to report fuzzy errors.

My imagediff program simply steps through the images and compares the color values to each other. The inner loop is extremely simple; heck, here it is:

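    xorDiff:
        movdqa  xmm0, XMMWORD PTR [eax]     ; load 16 bytes from the first source
        movdqa  xmm1, XMMWORD PTR [ecx]     ; load 16 bytes from the second source
        movdqa  xmm3, xmm0                  ; copy the first block
        pxor    xmm3, xmm1                  ; xor it with the second block
        movdqa  XMMWORD PTR [edx], xmm3     ; store the xor result in the destination
        add     eax, 16                     ; advance all three pointers
        add     ecx, 16
        add     edx, 16
        sub     esi, 1                      ; one block fewer to go (sets the zero flag)
        psadbw  xmm0, xmm1                  ; sum of absolute byte differences
        por     xmm2, xmm0                  ; OR into the running "any difference" accumulator
        jne     SHORT xorDiff               ; loop until the block counter reaches zero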

That's just what the standard Visual Studio compiler produces, using the SSE2 intrinsics available there.
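For anyone who doesn't read assembly, the loop can be roughly paraphrased in Python. This is only a sketch of what the listing does, not the actual source. Each iteration handles one 16-byte block, writes the xor of the two inputs to the output, and ORs a sum of absolute differences into an accumulator that ends up non-zero if any block differed. The function name is hypothetical, and the buffers are assumed to be equal in length and a multiple of 16 bytes.

```python
def xor_diff(src_a, src_b):
    """Return (xor_bytes, differs) for two equal-length byte buffers.

    Rough paraphrase of the SSE2 loop: one 16-byte block per iteration.
    """
    if len(src_a) != len(src_b) or len(src_a) % 16:
        raise ValueError("buffers must be equal length, multiple of 16 bytes")
    out = bytearray(len(src_a))
    accumulator = 0  # plays the role of xmm2
    for offset in range(0, len(src_a), 16):
        block_a = src_a[offset:offset + 16]
        block_b = src_b[offset:offset + 16]
        # pxor + store: the xor of the two blocks goes to the output image
        out[offset:offset + 16] = bytes(x ^ y for x, y in zip(block_a, block_b))
        # psadbw: sum of absolute byte differences (the hardware keeps one
        # sum per 8-byte half; a single sum is enough for this sketch)
        sad = sum(abs(x - y) for x, y in zip(block_a, block_b))
        # por: any non-zero sum leaves the accumulator non-zero
        accumulator |= sad
    return bytes(out), accumulator != 0
```

The accumulator is what lets the tool report "Images differ" without a second pass over the output.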

Comparison outputs

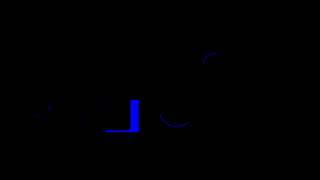

So what happens with perceptual diff when you output difference images? Well, if you download the sample images I've created, it looks like it simply outputs some reference values where things differ. This is fine in most cases.

The images I've created, on the other hand, are much simpler. The imagediff program has two modes, an xor mode and an absdiff mode. The xor mode simply performs an xor on the pixels, and the absdiff mode simply calculates the absolute difference between the two images. Since images convey much more than words, here are the (scaled) source images and then the difference images.
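The two modes can be illustrated on raw channel values. The sketch below is plain Python on byte strings rather than the actual imagediff code, and the function names are made up. It also shows why the two outputs look different: xor can turn a tiny change into a bright pixel, while the absolute difference stays proportional to the change.

```python
def xor_mode(pixels_a, pixels_b):
    """Per-byte xor of two equal-length pixel buffers."""
    return bytes(a ^ b for a, b in zip(pixels_a, pixels_b))


def absdiff_mode(pixels_a, pixels_b):
    """Per-byte absolute difference of two equal-length pixel buffers."""
    return bytes(abs(a - b) for a, b in zip(pixels_a, pixels_b))


# Channel values 127 and 128 differ by one step, but share no bits.
before = bytes([127, 10, 200])
after = bytes([128, 10, 190])
print(list(xor_mode(before, after)))      # [255, 0, 118]
print(list(absdiff_mode(before, after)))  # [1, 0, 10]
```

Both modes give zero wherever the inputs are identical, so either one works as a quick "did anything change" view.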

Source images

I cobbled together some source images that I could use for the diff program comparison. Here they are listed one by one.

So now that we've seen the source images I've used, let's take a look at the differences generated by the two programs.

Again perceptual diff

I'm running the patched perceptual diff from the latest source (1.0.1 as of writing). These are the output images:

Again Image Diff

These differences have been made with the image diff program I wrote.

In closing

Comparing images is quite handy sometimes. Changing the light parameters or the gamma correction calculations can introduce very subtle changes that are hard to see with the naked eye, even with both images side by side. Having the computer dispassionately tell you that the images are not equal can reveal surprising effects of code changes, and having a tool that automates this task is kind of handy. Depending upon your needs you might go with the simplest solution possible, an already existing tool. Otherwise you could roll your own in Python or just write a program yourself. It takes minimal time, and think of all the time you save on the subsequent runs!

Resources

- My patched Perceptual Diff with binaries and source code.

- My little image diff tool.

2008-07-07: Update

The FreeImage patch to Perceptual Diff has been applied to the main line of the source code on SourceForge.